Neuroevolution paper

A few years into my mid-career pivot into science, I've just landed my first published paper! What started as an idea I wrote on a napkin at the Cosyne neuroscience conference is finally out in Nature Communications: “Complex computation from developmental priors”.

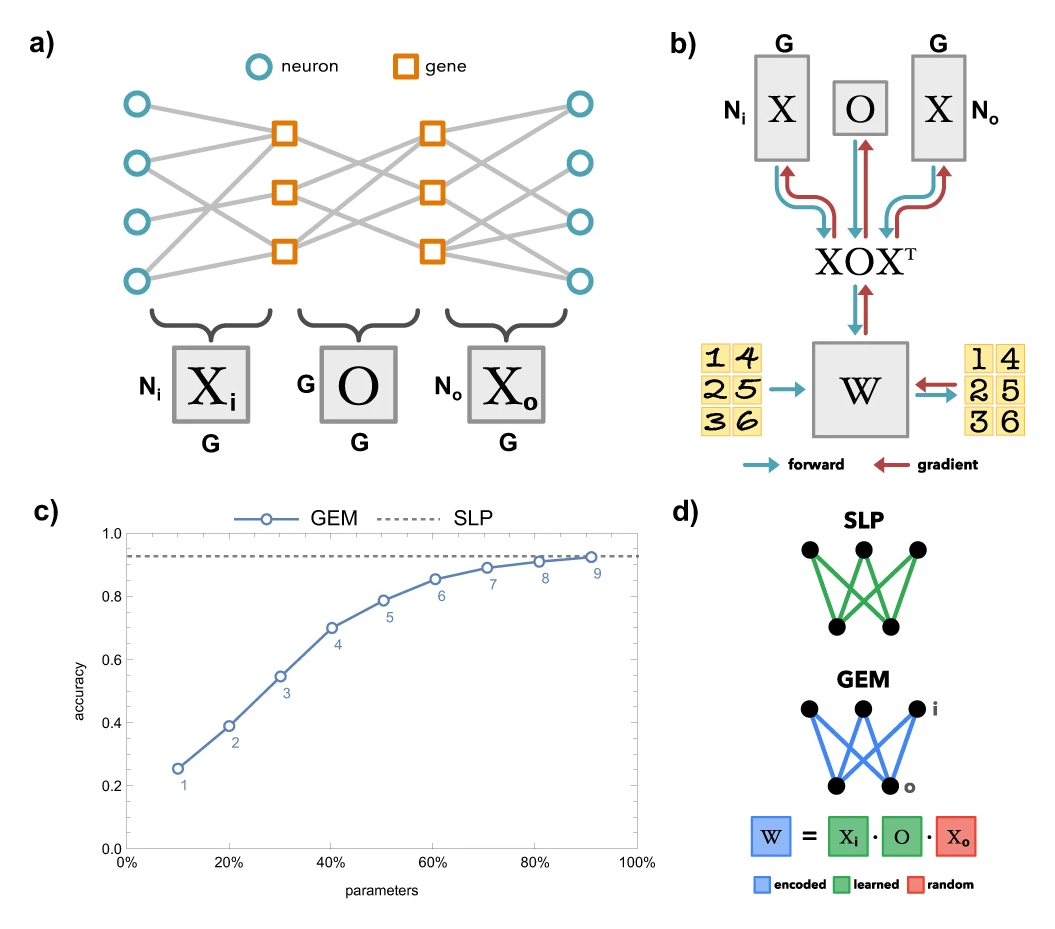

In the paper, my neuroscience collaborator Dániel and I describe how to cast neuroevolution as a metalearning problem by treating his XOX model of connectome generation as a differentiable step in a two-stage optimization process. An outer optimization loop, which models evolution, optimizes the X, O, and Y matrices (representing genetic factors) that establish the connectivity matrices (representing the connectome). An inner optimization loop, which models the organism's learning during its lifetime, then optimizes the resulting connectivity matrices. Together, the two loops model how evolution shapes brain connectivity to promote rapid in-lifetime learning.
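To make the two loops concrete, here is a minimal sketch in JAX. It rests on assumptions that are mine rather than the paper's: connectivity is taken to be the product W = X O Yᵀ, the "organism" is a single linear layer trained on a random regression task, and in-lifetime learning is a handful of plain gradient-descent steps. The function names (`develop`, `lifetime_learning`, `evolutionary_loss`, `evolve_step`), the sizes, and the learning rates are all hypothetical.

```python
import jax
import jax.numpy as jnp

# Hypothetical sizes: input neurons, output neurons, and genes per neuron.
N_IN, N_OUT, N_GENES = 8, 4, 3
INNER_LR, INNER_STEPS = 0.1, 5  # in-lifetime learning (assumed plain gradient descent)
OUTER_LR = 0.02                 # "evolutionary" step size (assumed)


def develop(genome):
    """Development: genetic factors -> connectivity, ASSUMING W = X @ O @ Y.T."""
    return genome["X"] @ genome["O"] @ genome["Y"].T  # shape (N_IN, N_OUT)


def task_loss(W, inputs, targets):
    """Toy stand-in task: mean squared error of a single linear layer."""
    return jnp.mean((inputs @ W - targets) ** 2)


def lifetime_learning(W, inputs, targets):
    """Inner loop: a few gradient-descent steps on the connectivity itself."""
    grad_w = jax.grad(task_loss)  # gradient with respect to W (first argument)
    for _ in range(INNER_STEPS):
        W = W - INNER_LR * grad_w(W, inputs, targets)
    return W


def evolutionary_loss(genome, inputs, targets):
    """Outer objective: performance AFTER learning, starting from the developed W."""
    W_learned = lifetime_learning(develop(genome), inputs, targets)
    return task_loss(W_learned, inputs, targets)


@jax.jit
def evolve_step(genome, inputs, targets):
    """Outer loop step: backpropagate through learning and development into X, O, Y."""
    loss, grads = jax.value_and_grad(evolutionary_loss)(genome, inputs, targets)
    genome = jax.tree_util.tree_map(lambda p, g: p - OUTER_LR * g, genome, grads)
    return genome, loss  # loss is measured before this step's update


# Usage on random toy data (not the paper's tasks).
k_x, k_o, k_y, k_in, k_t = jax.random.split(jax.random.PRNGKey(0), 5)
genome = {
    "X": 0.5 * jax.random.normal(k_x, (N_IN, N_GENES)),
    "O": 0.5 * jax.random.normal(k_o, (N_GENES, N_GENES)),
    "Y": 0.5 * jax.random.normal(k_y, (N_OUT, N_GENES)),
}
inputs = jax.random.normal(k_in, (32, N_IN))
targets = inputs @ jax.random.normal(k_t, (N_IN, N_OUT))

losses = []
for generation in range(100):
    genome, loss = evolve_step(genome, inputs, targets)
    losses.append(float(loss))
```

Because `lifetime_learning` is an ordinary differentiable function of its starting point, `jax.value_and_grad` can carry the post-learning loss back through every inner step and through `develop` into X, O, and Y. In this sketch the outer loop therefore rewards genomes whose developed connectivity lets a few learning steps go far, rather than simply rewarding a good initial W.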

For a slightly longer summary, you can read Dániel’s thread on Twitter.

Some backstory: I met Dániel Barabási at the Neuroscience Imbizo in 2019, and he told me about his “XOX” model of how gene expression in different neuron types could establish broad-scale connectivity patterns in the developing brain. Later, we bumped into each other at the Cosyne neuroscience conference, and I realized there was a simple way to recast the evolutionary problem of designing neural connectivity that makes an organism capable of rapid learning as a metalearning problem, in which backpropagation itself could be applied to optimize the matrices that establish the gene expression templates. After that, we met up in Hungary and spent a week enjoying the public baths and coffee shops and hacking on our model together. Fast forward a few years, and the paper had picked up a couple of co-authors and successfully wound its way through the complexities of cross-disciplinary peer review. I wrote the code, ran the experiments, and made most of the diagrams; Dániel and the co-authors wrote the prose.